Two business analysts. Two web developers. Two cyber security analysts. One data analyst. And one IT architect / technologist. We were all in an EMC class on Data Science and Big Data Analytics.

It was challenging, but most enlightening for me. It was challenging because of the depth of statistics, programming and business domain knowledge that one has to have. But for the technologist, it sheds ‘true’ light on what data science deals with. It helped me understand what data scientists really need to know to churn out those ‘predictive analytics’ that we, as technologists, so casually talk about. Although this is probably level 101 in the data science world, my appreciation for this domain has increased manyfold.

Here’s Mike’s Take on why the Digital Technologist must scale this data science learning curve.

From 30,000 feet. It’s all about the data science life cycle. It’s about conceptualizing the business problem into a statistical one. It’s about knowing which data and variables to include in the analysis, or not. It’s about knowing which statistical model would give you the outcome you need. It’s about testing your predictive model and operationalizing it.

The Business Challenge. We were tasked to develop a predictive analysis model for a housing loan application system. The model is supposed to generate a rating for online loan applicants based on information like gender, income, ethnicity, loan amount, geographic location, etc. Normally, financial institutions will just perform a ‘credit check’ and get back to the applicant, which typically takes days. This online predictive service, by contrast, provides an immediate rating to the applicant.

1. Data Preparation. We were given actual Home Mortgage Disclosure Act (HMDA) data from the US. There were 20-odd variables, from loan purpose, to past application actions, to loan type, geographic location, etc. The key task of the data scientist here is to clean the data: ensure it is in the right format, decide whether noisy variables should be considered in the model, and determine whether more than one model is needed. This is where the data scientist needs some knowledge of housing loans to make sense of the data.

2. Selecting the Data Set. The density plot showed that the data contained two groups with statistically separate behaviors, which must be analyzed separately for accurate prediction. I am understating how much this step matters. Really. One of our classmates spent a lot of time manipulating his model at later stages, only to discover that a noisy variable had been included in the analysis.
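For the technologists reading this, here is a minimal sketch of one way to screen for a noisy variable: score each candidate variable on its own and compute its AUC against the known outcome. This is only an illustration, not the exact procedure we followed in class. The toy data, the variable names (`income`, `noise`) and the helper `auc` are all made up for this example.

```python
def auc(scores, labels):
    """Chance that a random positive case scores higher than a random negative one."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Made-up toy data (NOT from HMDA): 1 = loan approved, 0 = loan denied.
approved = [1, 1, 1, 0, 0, 0]
candidates = {
    "income": [90, 75, 60, 65, 40, 30],  # tends to separate the two outcomes
    "noise": [5, 1, 3, 3, 5, 1],         # unrelated to the outcome
}

aucs = {name: auc(values, approved) for name, values in candidates.items()}
for name, value in aucs.items():
    print(f"{name}: AUC = {value:.2f}")
```

An AUC close to 0.5 suggests the variable tells you little on its own. It is a rough first filter only, since a variable can still matter in combination with others.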

3. Building the Model. We then had to define the outcome we intended to achieve, and select the right statistical model to achieve that outcome. During the theory part of the class, we were introduced to popular clustering, association and classification techniques, and their associated outcomes. K-means, linear regression, logistic regression, Naïve Bayes… don’t worry, the class will bring you up to speed. Two models were eventually used: Logistic Regression and Decision Tree. In an unrelated lab practice, we had the chance to build a market basket analysis for a grocer using association rules. Simply too cool.

4. The Model. The final outcome was a series of regression coefficients that represent a prediction function. Given the input from an online applicant, the function calculates the probability of a successful application. This came from the Logistic Regression model, and it is the output of this analytics and data science exercise.

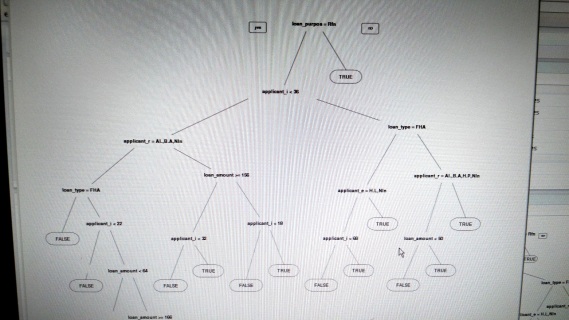
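To make the idea of a "prediction function" concrete, here is a minimal sketch of how logistic regression coefficients turn an applicant's input into a probability. The coefficient values and variable names below are hypothetical, chosen purely for illustration; they are not the ones our class model produced.

```python
import math

# Hypothetical coefficients for illustration only (not the class results).
COEFFICIENTS = {
    "intercept": -1.0,
    "income_thousands": 0.02,
    "loan_amount_thousands": -0.005,
}


def approval_probability(applicant):
    """Apply the logistic function to a weighted sum of the applicant's inputs."""
    z = COEFFICIENTS["intercept"]
    for name, value in applicant.items():
        z += COEFFICIENTS[name] * value
    return 1.0 / (1.0 + math.exp(-z))


# A made-up applicant: income of 80k, requested loan of 200k.
applicant = {"income_thousands": 80, "loan_amount_thousands": 200}
probability = approval_probability(applicant)
print(f"Estimated probability of approval: {probability:.2f}")
```

Once the coefficients are fitted, the online system only has to evaluate this one formula per applicant, which is why the rating can be returned immediately.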

The Decision Tree model produced a decision tree that served the same purpose: generating a prediction based on the applicant’s input.

5. Operationalizing with Continuous Development. While we didn’t cover this, the output of the predictive model (coefficients and decision logic) would then be used as the algorithm behind the “online loan prediction system”. And as more data is collected, the data scientist would continue to assess the existing model and refine it. Can you then imagine the need for a DevOps + PaaS capability to keep up with the changes on the coding side? Can you imagine the need for data science skills in every business and organization?

The above is just a snippet of the whole experience. And this class merely exposes the tip of the data science and analytics world. The learning here was the clarity with which a business problem and the desired outcome were translated into a statistical model with actionable outcomes. So, whether you are an end user or a vendor, this is a fantastic place to begin to understand data science.

Check out the class: https://education.emc.com/guest/campaign/data_science.aspx

Hey Michael, nice summary of your class on data science

Hi Mike, just curious to know what the noise in the data was and how you identified it? Thanks, Vik

It was a simple situation. We were supposed to generate a model that predicts whether a potential home owner is eligible for a loan. The data given to us to build the ML model contained a lot of variables. And like all predictive models, not all variables are relevant; in fact some will confound the model. So the noise was really the other variables. There were over 20 variables captured per home owner. Using simple statistical tools like log density, and even merely doing ROC and AUC (if my memory serves me well), we began eliminating variables that were of no significance. We finally narrowed it down to 3 variables, I think. With that we had our learning data (historical data) to build the model.

Thanks Mike, I truly appreciate it. Do you have the data set (Excel will do) to play with? To my knowledge, income and age are must-have variables, aren’t they? Please correct me if I am wrong. Curious to learn.

Thanks

Hi Vik, the data was downloaded directly from the US Home Mortgage Disclosure Act data; we were using actual data to build the model. Google it and you will be able to find various sites that let you download it.
